The Data Liability Trap in Cannabis AI Recommendations

The cannabis retail industry is moving faster than its lawyers can keep up. Every retail location operator I talk to is evaluating some flavor of AI sales associate. Pluggi, Terpli, StrainBrain, BudBot: all promising personalized recommendations, faster checkout, higher basket size. On the surface, it sounds smart. Better UX, conversion lift, competitive edge.

Then you dig into the data problem.

*Nobody told you where that data goes after the sale. That's the liability.*

Why AI Sales Associates Look Irresistible

Cannabis retail is brutally thin on margin. Competition is fierce.

Most retail locations operate on 15-25% gross margin, with rent, labor, and compliance eating most of it. An AI recommendation engine that can push higher-margin products, reduce customer decision paralysis, capture first-party data on preferences, and personalize next-visit offers looks like a survival tool, not a luxury.

The pitch is compelling. You integrate with your POS, feed the AI your live inventory, customer history, and terpene profiles. The AI learns. Recommendations get smarter. Revenue climbs.

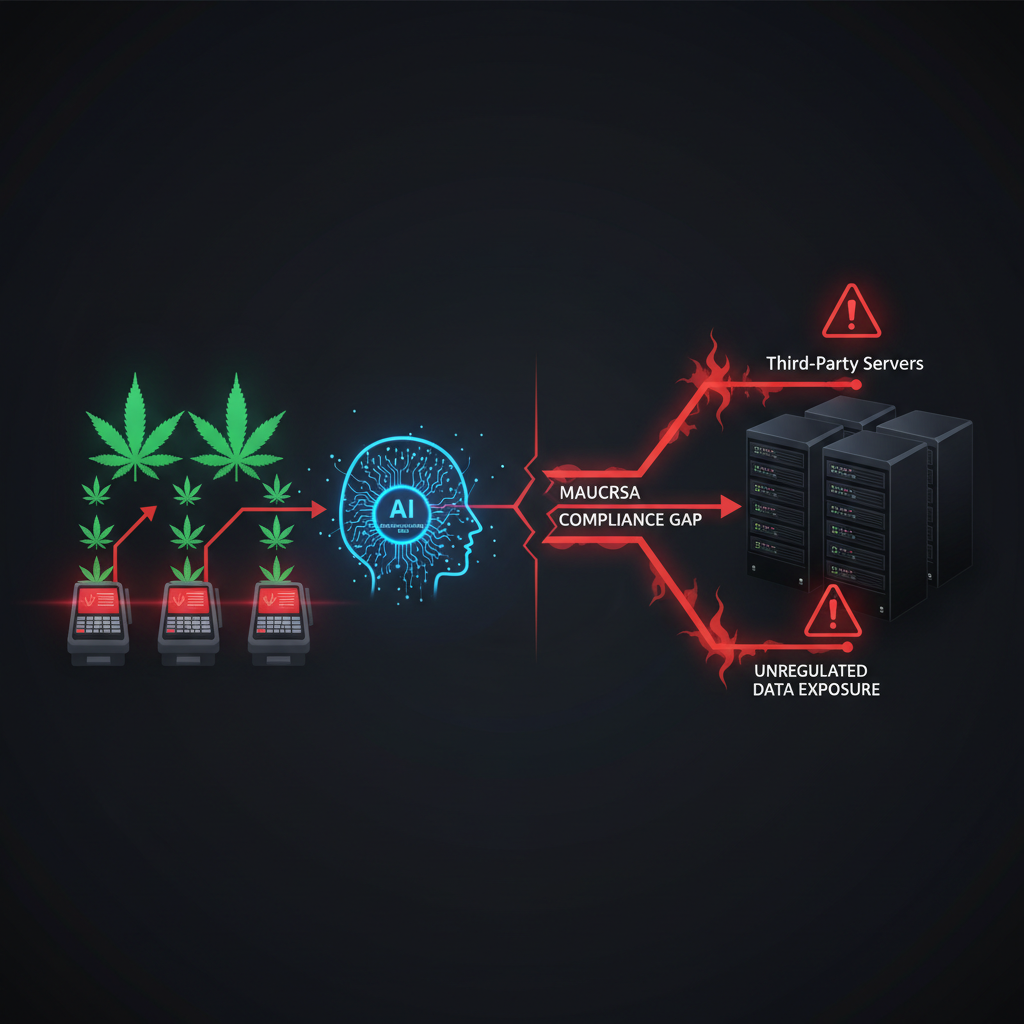

What nobody mentions: the data you're feeding into that engine is now sitting on someone else's servers. And if a customer's purchase history includes their preferred THC dosage, consumption method, and spending habits, you've just created a detailed psychographic profile.

The Regulatory Blind Spot

Here's the thing about cannabis compliance: it's fractured by state, and most state regulations predate AI entirely.

California's Medicinal and Adult-Use Cannabis Regulation and Safety Act (MAUCRSA) has data privacy rules, but they were written assuming transaction logs. Not AI-trained personalization models. Not behavioral data feeding neural networks.

Illinois, Colorado, Massachusetts? Similar story. The frameworks exist, but they don't explicitly contemplate how your customer data behaves inside an AI sales associate system.

Most regulated retailers assume that since they're compliant with POS reporting and seed-to-sale requirements, they're safe. They're not asking: Where does the AI training data live? Who owns the behavioral profiles? What happens if the vendor is acquired? What happens if there's a breach? Are you liable for how the AI uses customer data?

The answer to that last one is almost certainly yes. And you probably didn't read the vendor's terms carefully enough to know.

*Most operators are having this conversation too late.*

The Hidden Compliance Debt

Consider the mechanics of a typical AI sales associate integration.

A customer walks in and interacts with a kiosk or chatbot. The AI asks preference questions (effects wanted, consumption method, THC tolerance, hints about medical history). Those answers are sent to the vendor's API. The AI model processes them against your inventory. Recommendations are returned. The purchase happens, and the data is logged in the vendor's system for model improvement.

At that final step, you've created a customer behavioral profile that exists outside your POS system. You might not have explicit consent to use that data for model training. You definitely don't have clarity on retention, deletion, or third-party access.
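One way to limit what ends up in that outside profile is to filter the kiosk answers before they ever leave your building. The sketch below is an illustration under assumptions, not any vendor's actual API: the field names, the `ALLOWED_FIELDS` allow-list, and the `build_vendor_payload` helper are all hypothetical.

```python
import secrets

# Hypothetical allow-list: the only signals this sketch lets leave the store.
ALLOWED_FIELDS = {"desired_effects", "consumption_method", "potency_band"}


def build_vendor_payload(kiosk_answers: dict) -> dict:
    """Keep only allow-listed signals and attach a one-time session token."""
    payload = {k: v for k, v in kiosk_answers.items() if k in ALLOWED_FIELDS}
    # Random per-session token, so the vendor never receives your customer ID.
    payload["session_token"] = secrets.token_hex(16)
    return payload


answers = {
    "customer_id": "C-1042",             # stays in your POS
    "medical_notes": "trouble sleeping",  # never sent
    "desired_effects": ["relaxed"],
    "consumption_method": "edible",
    "potency_band": "low",
}
payload = build_vendor_payload(answers)
```

Whatever is not on the allow-list never reaches the vendor, which makes the later retention and deletion conversation a lot shorter.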

If a regulator later audits your retail location and discovers that customer preference data is being retained by a third-party AI vendor without documented customer consent, you're not just looking at a compliance note. You're looking at potential fines, license scrutiny, and reputational damage.

And that's before we consider interstate data flow. If your AI vendor is cloud-based and processes data across state lines, you've just entered a regulatory nightmare. Some states have residency requirements for cannabis customer data. Others don't, but they're watching.

The Conversion Trap Nobody Talks About

Here's the second risk: personalization at scale creates behavioral prediction, and behavioral prediction creates a new liability.

If your AI sales associate learns that a customer tends to buy high-potency products every Friday, and the system starts recommending them preferentially, you're now algorithmically encouraging a consumption pattern. If that customer is later involved in an incident (impaired driving, workplace accident, health issue), and discovery reveals that your recommendation engine was optimized for high-margin, high-potency products, you've handed the other side evidence that could support a negligence claim.

Cannabis retail is already under scrutiny for responsibility around consumption. Unlike alcohol, cannabis has no established "serve responsibly" framework. Regulators are watching how retail locations position potency, frequency, and use cases. An AI system that's quietly optimizing for repeat high-potency sales is a liability time bomb.
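If you want to know whether your engine is drifting that way, you can measure it. Here is a minimal sketch of one possible check, assuming you can export the THC percentage of recommended products and of your in-stock catalog. The `potency_skew` function and the 1.25 threshold are illustrative assumptions, not a regulatory standard.

```python
from statistics import mean

# Hypothetical review threshold; pick your own with counsel and compliance staff.
SKEW_ALERT_THRESHOLD = 1.25


def potency_skew(recommended_thc: list, catalog_thc: list) -> float:
    """Ratio of mean recommended THC % to mean catalog THC %; 1.0 means no skew."""
    return mean(recommended_thc) / mean(catalog_thc)


def needs_review(recommended_thc: list, catalog_thc: list) -> bool:
    """Flag the engine for human review when recommendations lean high-potency."""
    return potency_skew(recommended_thc, catalog_thc) > SKEW_ALERT_THRESHOLD
```

A ratio well above 1.0 doesn't prove anything on its own, but it's the kind of number you'd rather find yourself than have surfaced in discovery.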

What Smart Operators Are Doing

The retail locations handling this correctly are doing five things: explicitly capturing consent before every AI interaction with clear, documented language; minimizing data by collecting only the behavioral signals needed for recommendations, not the full conversation history; securing vendor agreements with data residency clauses that keep customer data within your state and set explicit deletion schedules; maintaining audit trails that log exactly what data the AI used for each recommendation; and allowing customers to opt out of personalization entirely without losing service quality.
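Three of those practices (consent, audit trails, and opt-out) can live in the same few lines of integration code. What follows is a rough sketch under assumptions, not a reference implementation: the `Consent` and `AuditTrail` types, the `recommend` function, and the catalog fields are all hypothetical, and a real system would persist the audit entries somewhere durable.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Consent:
    session_id: str
    notice_version: str           # which consent wording the customer saw
    personalization_opt_in: bool


@dataclass
class AuditTrail:
    entries: list = field(default_factory=list)

    def record(self, session_id: str, fields_used: list, skus: list) -> None:
        """Log which data fields fed a recommendation, and what was recommended."""
        self.entries.append({
            "session_id": session_id,
            "fields_used": fields_used,
            "recommended_skus": skus,
            "at": datetime.now(timezone.utc).isoformat(),
        })


def recommend(consent: Optional[Consent], signals: dict,
              catalog: list, audit: AuditTrail) -> list:
    # No documented consent, no AI interaction at all.
    if consent is None:
        raise PermissionError("no documented consent for this session")
    if consent.personalization_opt_in:
        wanted = set(signals.get("desired_effects", []))
        picks = [p for p in catalog if p["effect"] in wanted][:3]
        fields_used = ["desired_effects"]
    else:
        # Opted out: same service, generic picks, no behavioral data touched.
        picks = catalog[:3]
        fields_used = []
    audit.record(consent.session_id, fields_used, [p["sku"] for p in picks])
    return picks
```

The point of the design is that the opt-out path and the audit entry are not bolted on later: every recommendation, personalized or not, leaves a record of exactly which fields were used.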

The operators cutting corners are betting that regulators won't connect the dots for at least 2-3 years. They might be right. But the ones building compliance into the AI strategy from day one are the ones who won't be dealing with reputational and legal fallout when the first enforcement action lands.

Bottom Line

AI sales associates are not a bad idea. They're genuinely useful for customer experience and conversion. But they're only as safe as the data architecture supporting them.

Before you sign a vendor agreement for any AI recommendation engine, ask hard questions about data ownership, retention, interstate flow, and customer consent. Get your legal team involved. Document everything.

The margin boost isn't worth regulatory exposure you don't understand yet.