Compliance Just Became Algorithmic Liability

Retail locations are racing to install AI-powered age verification systems. NIST SP 800-63-4, the federal government's finalized digital identity standard, which dropped in early 2026, opened the door to AI-based age gating that feels faster, cheaper, and more seamless than traditional ID scans.

But here's what most retail locations don't realize: deploying AI age verification without understanding the compliance layer underneath is like building a fire escape that looks safe but has no inspector approval. The technology works until it doesn't, and when it fails, the liability and regulatory exposure sit entirely with the retailer.

The problem is not that AI age verification is bad. The problem is that retail location operators are adopting it without asking three critical questions. What happens when the AI gets it wrong? What data is being stored and for how long? And which regulator is actually going to hold you accountable?

*The accountability chain just got a lot longer. Most retail locations don't know it yet.*

The Shift From Checkbox Compliance to Accountability

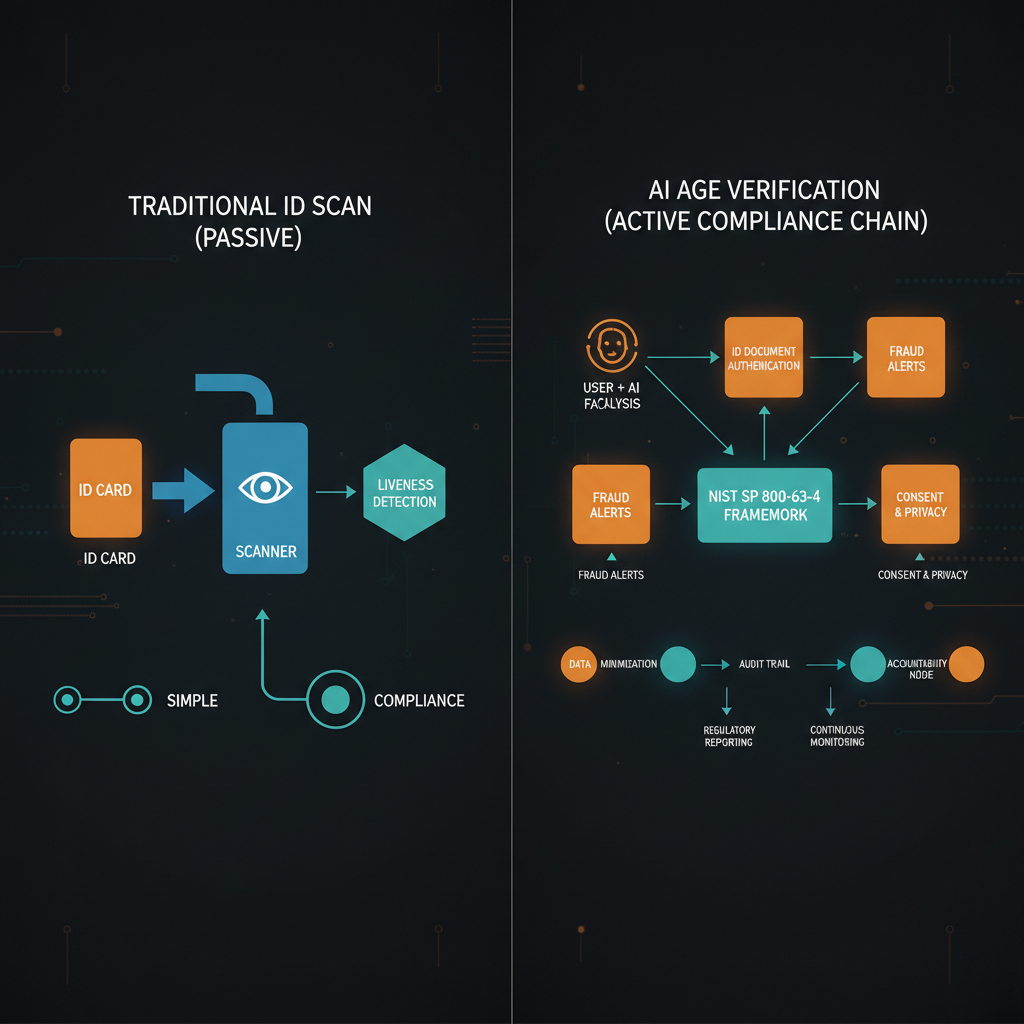

For years, age verification was a checkbox. You scan an ID, the system confirms the person is 21 or older, they buy their product, life goes on. The ID scan was temporary, the liability was clear, and the process was painless.

NIST SP 800-63-4 changed that. The new framework elevated age verification from a passive check to an active identity assurance requirement. It's no longer enough to verify someone is over 21. You now need to prove that your verification method is reliable, auditable, and defensible.

That's where AI comes in. Companies like Blaze, which launched its Herbie AI sales associate in April 2026, are marketing AI age verification as a solution. Scan a face, the AI evaluates age signals, the system makes a decision in milliseconds. No human judgment. No ambiguity. Just automation.

What the marketing doesn't mention is that you've now traded one compliance problem for another. You're no longer liable for human error. You're liable for algorithmic bias, data retention, and proof that your AI system actually works across demographics.

The Algorithmic Liability Problem

AI age verification systems are notoriously bad at performing consistently across demographics. Studies from MIT and Stanford show that facial recognition age estimation has error rates of 3-5 percent overall, but those errors cluster heavily by race and gender.

An AI system trained primarily on younger, lighter-skinned faces will systematically overestimate the age of older customers and underestimate the age of younger customers of color.

For a cannabis retail location, this creates two opposite liability vectors. If the AI rejects a legal customer, you have a frustrated customer and, if the errors cluster by demographics, a potential discrimination claim. If the AI approves an underage customer, you now have a regulatory violation, potential criminal liability, and license suspension exposure.

Most retail locations installing these systems have not run demographic parity testing on their AI. They don't know if their system fails more often for certain groups. And regulators are just now starting to look at this problem, which means you're the test case.
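A demographic parity test does not have to be elaborate. The sketch below assumes you have a consented test set where each person's true age is confirmed from a valid ID, and it compares how often the AI wrongly rejects adults or wrongly approves minors in each group. The record keys (`group`, `true_age`, `estimated_age`) and the function names are illustrative assumptions, not part of any vendor's API.

```python
from collections import defaultdict

LEGAL_AGE = 21  # purchase age used throughout this article


def error_rates_by_group(records, legal_age=LEGAL_AGE):
    """Return false-reject and false-accept rates for each demographic group.

    Each record is a dict with hypothetical keys:
      'group'         - demographic label from a consented test set
      'true_age'      - age confirmed from a valid ID
      'estimated_age' - age returned by the AI system under test
    """
    counts = defaultdict(
        lambda: {"adults": 0, "false_reject": 0, "minors": 0, "false_accept": 0}
    )
    for r in records:
        c = counts[r["group"]]
        is_adult = r["true_age"] >= legal_age
        approved = r["estimated_age"] >= legal_age
        if is_adult:
            c["adults"] += 1
            if not approved:
                c["false_reject"] += 1  # legal customer turned away
        else:
            c["minors"] += 1
            if approved:
                c["false_accept"] += 1  # underage customer let through

    rates = {}
    for group, c in counts.items():
        rates[group] = {
            "false_reject_rate": c["false_reject"] / c["adults"] if c["adults"] else None,
            "false_accept_rate": c["false_accept"] / c["minors"] if c["minors"] else None,
        }
    return rates


def parity_gap(rates, metric):
    """Largest difference in a given error rate between any two groups."""
    values = [r[metric] for r in rates.values() if r[metric] is not None]
    return max(values) - min(values) if values else 0.0
```

A large `parity_gap` on either metric is the signal to take to your vendor before a regulator or a plaintiff finds it for you. What counts as "large" is a policy decision this sketch does not make.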

*When regulators come knocking, this is what they'll want to see.*

The Data Retention Minefield

Age verification in the AI era means storing more than just a simple ID check result. You're storing facial images for the age estimation model to process, the model's confidence score and reasoning, demographic data inferred by the system, timestamps and location data, and the final accept or reject decision.

Regulators care deeply about what you store and for how long. State privacy laws, particularly in Colorado and California, are getting more aggressive about ID image retention. In 2025, Ohio's marijuana card database was breached, exposing purchase history for thousands of customers. State regulators responded by getting stricter about what data can be held and for how long.

The most compliant retail locations are deleting facial images immediately after age verification completes. But that creates a new problem: you can't audit the system retroactively or prove to regulators that the verification was legit if there's ever a dispute. So you're caught between two bad options. Store data and risk fines, or delete data and sacrifice auditability.
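One possible middle path, sketched here under assumptions, is to delete the image but keep a signed record of the decision. The example stores a SHA-256 fingerprint of the image plus the decision, confidence score, and model version, then signs the record with an HMAC so later tampering is detectable. The field names and the `secret_key` handling are hypothetical, and whether a hash of a facial image still counts as regulated biometric data in your state is a question for counsel, not for this code.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone


def build_audit_record(image_bytes, decision, confidence, model_version,
                       secret_key, now=None):
    """Create a signed audit record that does not contain the image itself."""
    now = now or datetime.now(timezone.utc)
    record = {
        "timestamp": now.isoformat(),
        "decision": decision,              # e.g. "accept" or "reject"
        "confidence": round(confidence, 4),
        "model_version": model_version,
        # One-way fingerprint of the image; the image is discarded after this.
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["signature"] = hmac.new(secret_key, payload, hashlib.sha256).hexdigest()
    return record


def verify_audit_record(record, secret_key):
    """Return True if the record has not been altered since it was signed."""
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    payload = json.dumps(unsigned, sort_keys=True).encode("utf-8")
    expected = hmac.new(secret_key, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record.get("signature", ""))
```

This proves that a check happened, when, and with what outcome. It does not let you re-run the model on the original face, so it narrows the auditability gap without closing it.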

The Regulator's Next Move

State cannabis regulators are still figuring out how to enforce age verification standards. Colorado's Marijuana Enforcement Division, which oversees one of the largest legal markets, has not yet issued specific guidance on AI-based age verification. Neither has California's Department of Cannabis Control.

That vacuum is dangerous. It means retail locations are deploying AI systems without clear guidance on what regulators are going to audit for or enforce. When regulators finally do move, they'll likely focus on the biggest operators first. And the first enforcement action sets the precedent for everyone else.

The pattern is predictable: regulators see AI age verification, they want to understand how it works, they find algorithmic disparities or data retention failures, they make examples out of early adopters, and then every other retail location has to scramble to reconfigure their systems.

If you're installing AI age verification right now, you're betting that regulators won't care about demographic parity or data retention until later. That's a bet I wouldn't take.

What Responsible Deployment Looks Like

The retail locations that are going to survive the inevitable regulatory tightening are the ones asking hard questions now.

Demographic parity testing: Have you stress-tested your AI age verification system across age groups, skin tones, and genders? If not, you don't know if it's fair.

Data retention documentation: Do you have a written policy on how long you store facial images and verification data? Is it compliant with your state's privacy requirements?

Audit trail: Can you prove to regulators that a specific customer passed age verification on a specific date? And can you do that without storing facial images permanently?

Human override: Does your system have a clear path for human review when the AI is uncertain? And is that review logged?

Vendor accountability: If you're using a third-party AI age verification system, does your contract make them liable for algorithmic bias and regulatory compliance?

Most retail locations are installing AI age verification without answers to any of these questions. They're assuming that if the system works most of the time, that's good enough. It's not.
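The human override question above can be made concrete with a simple routing rule: auto-decide only when the model is confident and the estimate is well clear of the legal age, and send everything else to a staff member with the handoff logged. This is a minimal sketch; the thresholds (`age_buffer`, `min_confidence`) and the `Decision` type are assumptions for illustration, and real values should come from your own testing.

```python
import logging
from dataclasses import dataclass

logger = logging.getLogger("age_verification")


@dataclass
class Decision:
    outcome: str  # "accept", "reject", or "human_review"
    reason: str


def route_decision(estimated_age, confidence, legal_age=21,
                   age_buffer=4.0, min_confidence=0.90):
    """Route an AI age estimate to an automatic decision or a human check.

    age_buffer and min_confidence are hypothetical defaults, not
    regulatory values.
    """
    near_threshold = abs(estimated_age - legal_age) < age_buffer
    if confidence < min_confidence or near_threshold:
        decision = Decision("human_review",
                            "low confidence or estimate near legal age")
    elif estimated_age >= legal_age:
        decision = Decision("accept", "estimate clearly above legal age")
    else:
        decision = Decision("reject", "estimate clearly below legal age")

    # Log every routing outcome so human reviews leave an audit trail.
    logger.info("age_check outcome=%s confidence=%.2f reason=%s",
                decision.outcome, confidence, decision.reason)
    return decision
```

Widening `age_buffer` sends more customers to a manual ID check, which costs speed but shrinks the range where the model's known weaknesses can cause a violation.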

The Bigger Pattern

This is not unique to age verification. Cannabis retail is automating every customer-facing interaction right now: AI-powered recommendations, chatbots for customer service, algorithmic inventory management, personalized marketing based on purchase history.

Each of these systems has the same underlying problem: they're being deployed at scale without understanding the compliance layer, the failure modes, or what regulators are going to care about when they finally look.

The brands that are going to win in 2026 and beyond are not the ones moving fastest. They're the ones who understand that compliance is not friction; it is a competitive moat.

When regulators finally tighten standards on AI systems, the retail locations that have been building proper audit trails, testing for bias, and documenting data retention are going to be ahead. Everyone else is going to scramble.

If you're deploying AI age verification right now, do it right. Document everything. Test for bias. Understand your data retention obligations. The alternative is being a case study for regulators explaining what not to do.

The age verification trap is real. The question is whether you're going to fall into it or build a bridge over it.